Amazon Bedrock is the centerpiece of the AWS Certified Generative AI Developer - Professional (AIP-C01) exam. As AWS's fully managed foundation model service, Bedrock provides access to leading AI models and production-ready features that form the foundation of enterprise GenAI applications. This comprehensive guide covers every Bedrock feature you need to master for exam success.

Exam Weight: 80%+ Bedrock Content

Amazon Bedrock concepts appear across all five AIP-C01 exam domains. Questions frequently test your understanding of Knowledge Bases, Agents, Guardrails, model selection, and the Converse API. This guide focuses exclusively on Bedrock features critical to passing the exam.

What is Amazon Bedrock?

Amazon Bedrock is a fully managed service that provides API access to foundation models (FMs) from Amazon and leading AI companies. Unlike self-hosted models, Bedrock eliminates infrastructure management while providing enterprise-grade security, privacy, and compliance.

Key Value Propositions:

- No Infrastructure Management: Models run on AWS-managed infrastructure

- Private & Secure: Your prompts and completions are not used to train the base models and are not shared with model providers

- Multiple Providers: Access Claude, Llama, Titan, Mistral, and more through unified APIs

- Enterprise Features: Built-in guardrails, knowledge bases, agents, and fine-tuning

- Pay-Per-Use: Token-based pricing with no upfront commitments


Foundation Models in Bedrock

Understanding model selection is critical for AIP-C01. The exam tests your ability to choose appropriate models based on use case, cost, performance, and capability requirements.

Core Topics

- Nova Micro: Text-only, lowest latency, cost-optimized

- Nova Lite: Multimodal (text + image), fast processing

- Nova Pro: Multimodal with complex reasoning

- Nova Premier: Most capable model in the Nova family

- All Nova models are trained on responsible AI principles

- Native function calling and tool use support

- Up to 300K token context windows

Example Question Topics

- Which Nova model is best for real-time customer support chatbots?

- When should you choose Nova Pro over Nova Lite for document analysis?

Exam Model Selection Strategy

Quick Decision Framework:

- Lowest cost, simple tasks: Amazon Titan Text Lite, Claude Haiku 4.5, Nova Micro

- Best for coding & agents: Claude Sonnet 4.5, Nova Pro

- Frontier reasoning: Claude Opus 4.5 (with extended thinking)

- Open-source requirement: Llama 3.1/3.2

- Embeddings for RAG: Titan Embeddings V2, Cohere Embed

- Image generation: Titan Image Generator, Stability AI

Bedrock Converse API

The Converse API is Bedrock's unified interface for all chat-based model interactions. It provides a consistent request/response format across different foundation models, simplifying multi-model applications.

Key Features:

- Unified Interface: Same API structure for Claude, Llama, Titan, Mistral

- Message History: Built-in conversation context management

- Tool Use: Native function calling across supported models

- Streaming: Real-time response streaming for low-latency UX

- System Prompts: Consistent system message handling

Converse API vs InvokeModel API

| Feature | Converse API | InvokeModel API |
|---|---|---|
| Interface | Unified across models | Model-specific formats |
| Conversation History | Built-in management | Manual implementation |
| Tool Use | Native support | Model-dependent |
| Streaming | ConverseStream | InvokeModelWithResponseStream |
| Best For | Chat applications | Custom integrations |
| Exam Focus | HIGH | Medium |

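```python
# Converse API Example (Exam-relevant pattern)
import boto3

# Runtime client for inference calls; Region and credentials come from your AWS config.
bedrock = boto3.client('bedrock-runtime')

response = bedrock.converse(
    # Check the console for the exact model or inference profile ID available in your Region.
    modelId='anthropic.claude-sonnet-4-5-20250929-v1:0',
    messages=[
        {
            'role': 'user',
            'content': [{'text': 'Explain RAG architecture in 3 sentences.'}]
        }
    ],
    system=[{'text': 'You are an AWS solutions architect.'}],
    inferenceConfig={
        'maxTokens': 500,
        # Recent Claude models accept either temperature or topP, not both.
        'temperature': 0.7
    }
)

# The reply is a list of content blocks; the first block holds the text.
print(response['output']['message']['content'][0]['text'])
```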
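The streaming variant mentioned in the comparison table, ConverseStream, returns an event stream instead of a single message. The following is a minimal sketch, assuming you pass in a `boto3.client('bedrock-runtime')` instance as `client`; the helper name `stream_text` is hypothetical, and only text deltas are handled (tool-use deltas and metadata events are ignored).

```python
def stream_text(client, model_id, user_text):
    """Yield text fragments from a ConverseStream call as they arrive."""
    response = client.converse_stream(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": user_text}]}],
    )
    for event in response["stream"]:
        # Text arrives in contentBlockDelta events; other event types
        # (messageStart, messageStop, metadata) are skipped here.
        delta = event.get("contentBlockDelta", {}).get("delta", {})
        if "text" in delta:
            yield delta["text"]
```

A caller would typically print each fragment as it arrives, for example `for piece in stream_text(client, model_id, "Hi"): print(piece, end="")`.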

Exam Focus: Tool Use with Converse

The AIP-C01 exam frequently tests tool use (function calling) through the Converse API. Understand how to define tools, handle tool use requests, and return tool results. This is critical for Bedrock Agents implementation.
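The define / handle / return cycle can be sketched as a small loop. This is an illustration under stated assumptions, not a reference implementation: the `get_order_status` tool and its canned result are hypothetical, and `client` is expected to be a `boto3.client('bedrock-runtime')` instance supplied by the caller.

```python
# Hypothetical tool definition in the Converse toolConfig format.
TOOL_CONFIG = {
    "tools": [
        {
            "toolSpec": {
                "name": "get_order_status",
                "description": "Look up the shipping status of an order.",
                "inputSchema": {
                    "json": {
                        "type": "object",
                        "properties": {"order_id": {"type": "string"}},
                        "required": ["order_id"],
                    }
                },
            }
        }
    ]
}


def get_order_status(order_id):
    # Stand-in for a real lookup (database, internal API, ...).
    return {"order_id": order_id, "status": "SHIPPED"}


def run_with_tools(client, model_id, user_text, max_turns=5):
    """Call Converse, run any requested tools, and return the final text."""
    messages = [{"role": "user", "content": [{"text": user_text}]}]
    for _ in range(max_turns):
        response = client.converse(
            modelId=model_id, messages=messages, toolConfig=TOOL_CONFIG
        )
        assistant_message = response["output"]["message"]
        messages.append(assistant_message)
        if response["stopReason"] != "tool_use":
            return "".join(
                block["text"]
                for block in assistant_message["content"]
                if "text" in block
            )
        # Answer every toolUse block in a single follow-up user message.
        results = []
        for block in assistant_message["content"]:
            if "toolUse" in block:
                tool_use = block["toolUse"]
                output = get_order_status(**tool_use["input"])
                results.append(
                    {
                        "toolResult": {
                            "toolUseId": tool_use["toolUseId"],
                            "content": [{"json": output}],
                        }
                    }
                )
        messages.append({"role": "user", "content": results})
    raise RuntimeError("Model kept requesting tools past max_turns")
```

The key detail is that each `toolResult` echoes the `toolUseId` from the matching `toolUse` block, and the results go back to the model in a `user` message.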

Amazon Bedrock Knowledge Bases

Knowledge Bases enable Retrieval-Augmented Generation (RAG) by connecting foundation models to your organization's data. This is the most heavily tested Bedrock feature on the AIP-C01 exam.

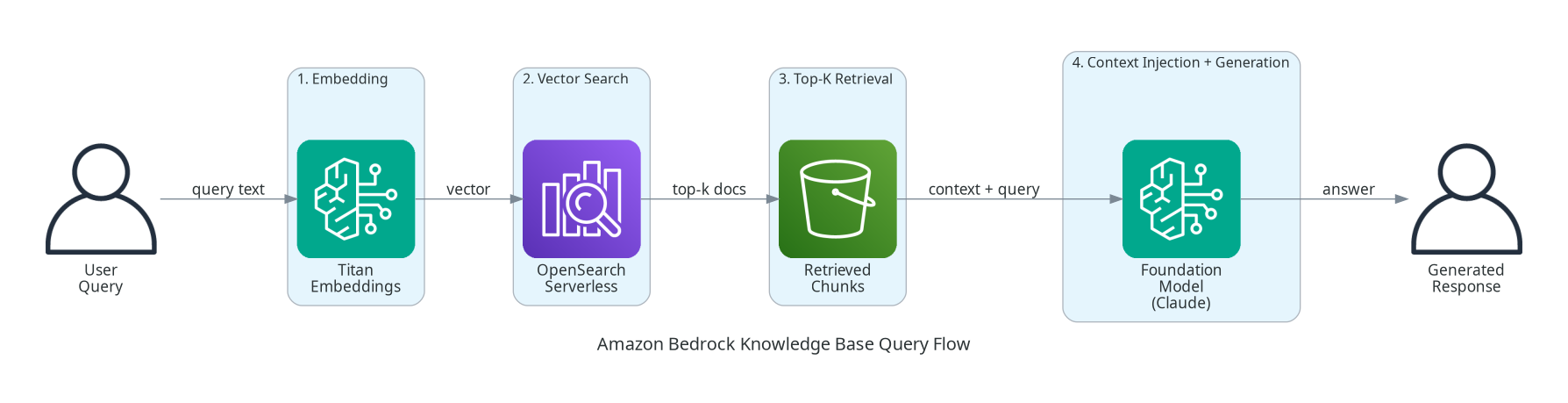

Architecture Overview:

- Data Sources: S3 buckets, Confluence, SharePoint, Salesforce, web crawlers

- Chunking: Documents split into manageable pieces

- Embedding: Chunks converted to vectors using embedding models

- Vector Store: Vectors stored in OpenSearch Serverless, Aurora pgvector, Pinecone, or S3 Vectors

- Retrieval: Semantic search finds relevant chunks at query time

- Generation: Foundation model generates response using retrieved context
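Knowledge Bases performs the chunking step for you, so the following is only an illustration of what fixed-size chunking with overlap does. It is a simplified sketch that counts whitespace-separated words rather than model tokens; the function name and defaults are assumptions made for the example.

```python
def chunk_words(text, chunk_size=300, overlap_pct=20):
    """Split text into word chunks where neighbours share overlap_pct of their words."""
    if chunk_size <= 0 or not 0 <= overlap_pct < 100:
        raise ValueError("chunk_size must be > 0 and overlap_pct in [0, 100)")
    words = text.split()
    # Each new chunk starts `step` words after the previous one.
    step = max(1, chunk_size - chunk_size * overlap_pct // 100)
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

Overlap keeps a sentence that straddles a chunk boundary retrievable from at least one chunk, at the cost of storing some words twice.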

Core Topics

- Amazon S3 (primary source)

- Confluence Cloud and Data Center

- SharePoint Online

- Salesforce

- Web Crawler for public websites

- Custom data connectors

- Supported formats: PDF, TXT, MD, HTML, DOC, CSV

Example Question Topics

- How do you configure a Knowledge Base to sync documents from both S3 and Confluence?

- What IAM permissions are required for the Knowledge Base service role to access S3?

NEW: S3 Vectors (2025)

S3 Vectors eliminates the need for a separate vector database. Vectors are stored directly in S3 with built-in indexing. Benefits:

- Zero vector database management

- Automatic scaling

- Lower cost for moderate workloads

- Native S3 security and compliance

- Best for applications under 1M vectors

Knowledge Base Query Flow:
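At query time the question is embedded, the closest chunks are retrieved from the vector store, and the foundation model generates an answer from them. The `RetrieveAndGenerate` operation runs these steps in one call. A minimal sketch, assuming `client` is a `boto3.client('bedrock-agent-runtime')` instance and that the documents live in S3; the helper name is hypothetical.

```python
def ask_knowledge_base(client, kb_id, model_arn, question):
    """Return (answer, source_uris) for a question against a Knowledge Base."""
    response = client.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,
                "modelArn": model_arn,
            },
        },
    )
    answer = response["output"]["text"]
    # Collect the distinct S3 URIs cited in the answer, in order of appearance.
    sources = []
    for citation in response.get("citations", []):
        for ref in citation.get("retrievedReferences", []):
            uri = ref.get("location", {}).get("s3Location", {}).get("uri")
            if uri and uri not in sources:
                sources.append(uri)
    return answer, sources
```

When you only need the retrieved chunks and want to build the prompt yourself, the separate `Retrieve` operation returns them without the generation step.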

Knowledge Base Retrieval Strategies

| Strategy | Use Case | Pros | Cons |
|---|---|---|---|
| Semantic Search | General Q&A | High relevance | May miss exact matches |
| Hybrid Search | Mixed queries | Best of both | More complex |
| Metadata Filtering | Structured data | Precise control | Requires metadata |
| Multi-query | Complex questions | Better coverage | Higher latency |
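Hybrid search and metadata filtering are both set through the `retrievalConfiguration` argument of the `Retrieve` call. The sketch below only builds that dictionary; the `department` metadata key is a hypothetical attribute that would have to exist in your documents' metadata, and hybrid search is only honored by vector stores that support it.

```python
def build_retrieval_config(top_k=5, hybrid=False, department=None):
    """Build a retrievalConfiguration dict for a Knowledge Base Retrieve call."""
    vector_config = {"numberOfResults": top_k}
    if hybrid:
        # Combine semantic and keyword matching instead of semantic only.
        vector_config["overrideSearchType"] = "HYBRID"
    if department is not None:
        # Only return chunks whose metadata has department == <value>.
        vector_config["filter"] = {
            "equals": {"key": "department", "value": department}
        }
    return {"vectorSearchConfiguration": vector_config}
```

With a `bedrock-agent-runtime` client this would be passed as `client.retrieve(knowledgeBaseId=..., retrievalQuery={"text": question}, retrievalConfiguration=build_retrieval_config(hybrid=True, department="legal"))`.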

Amazon Bedrock Agents

Bedrock Agents enable autonomous AI systems that can plan, execute actions, and interact with external tools and APIs. This is the second most tested feature on AIP-C01.

Agent Capabilities:

- Autonomous Planning: Break complex tasks into steps

- Tool Invocation: Call Lambda functions, APIs, Knowledge Bases

- Multi-turn Conversations: Maintain context across interactions

- Action Groups: Define available tools and their parameters

- Knowledge Base Integration: Ground responses in enterprise data

Core Topics

- Foundation model selection for agents

- Action groups and Lambda functions

- OpenAPI schema definitions

- Agent instructions and system prompts

- Session management and state

- Return of control patterns

- Agent aliases and versioning

Example Question Topics

- How do you configure an agent to query a database, process results, and send email notifications?

- What is the difference between return of control and standard agent execution?

Agent Components:

- Foundation Model: Powers reasoning and planning (Claude recommended)

- Instructions: System prompt defining agent behavior

- Action Groups: Tools the agent can invoke

- Knowledge Bases: Data sources for grounded responses

- Guardrails: Safety controls for inputs and outputs
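An action group backed by Lambda receives the function name and parameters chosen by the agent and must answer in a fixed response shape. Below is a minimal sketch for an action group defined with function details (an OpenAPI-based action group uses `apiPath` and `httpMethod` fields instead). The `get_flight_price` function and the fare it returns are placeholders invented for the example.

```python
def lambda_handler(event, context):
    """Handle a Bedrock Agents action group call defined with function details."""
    # Parameters arrive as a list of {"name", "type", "value"} dicts.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}

    if event["function"] == "get_flight_price":
        # Placeholder result; a real handler would call a pricing service.
        body = (
            f"Cheapest fare from {params['origin']} "
            f"to {params['destination']}: $199"
        )
    else:
        body = f"Unknown function: {event['function']}"

    # The agent expects the action group and function names echoed back.
    return {
        "messageVersion": event.get("messageVersion", "1.0"),
        "response": {
            "actionGroup": event["actionGroup"],
            "function": event["function"],
            "functionResponse": {"responseBody": {"TEXT": {"body": body}}},
        },
    }
```

With return of control, no Lambda is invoked: the agent hands the same function name and parameters back to the calling application, which performs the action and sends the result in the next `InvokeAgent` request.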

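```python
# Agent Invocation Pattern
import boto3

# Agents are invoked through the bedrock-agent-runtime client.
bedrock_agent_runtime = boto3.client('bedrock-agent-runtime')

response = bedrock_agent_runtime.invoke_agent(
    agentId='AGENT_ID',
    agentAliasId='ALIAS_ID',
    sessionId='unique-session-id',  # reuse the same ID to continue a conversation
    inputText='Book a flight from NYC to LAX for next Friday'
)

# Handle streaming response: the completion is an event stream of byte chunks
for event in response['completion']:
    if 'chunk' in event:
        print(event['chunk']['bytes'].decode())
```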

Exam Trap: Agent vs Knowledge Base

Know when to use Agents vs Knowledge Bases alone:

- Knowledge Base only: Simple Q&A, document search, no actions needed

- Agent + Knowledge Base: Complex tasks requiring actions + data retrieval

- Agent without KB: Action execution without enterprise data grounding

Amazon Bedrock Guardrails

Guardrails provide content filtering, topic restrictions, and safety controls for GenAI applications. This is heavily tested in Domain 3 (AI Safety, Security, and Governance - 20%).

Core Topics

- Content filters: Hate, insults, sexual, violence, misconduct, prompt attacks

- Denied topics: Block specific subjects

- Word filters: Explicit word blocking

- PII detection and redaction

- Regex patterns for custom filtering

- Contextual grounding: Reduce hallucinations

- Input and output guardrails

Example Question Topics

- How do you configure guardrails to detect and redact credit card numbers in agent responses?

- A healthcare app needs to block discussions of drug prices. How should guardrails be configured?

Guardrail Configuration Layers:

| Layer | Purpose | Configuration |
|---|---|---|
| Content Filters | Block harmful content | Set filter strengths (NONE/LOW/MEDIUM/HIGH) |
| Denied Topics | Block specific subjects | Define topic descriptions |
| Word Filters | Block explicit words | Add word lists |
| PII Filters | Protect sensitive data | Enable PII types, set action (BLOCK/ANONYMIZE) |
| Contextual Grounding | Reduce hallucinations | Set grounding threshold |

PII Filter Actions

| Action | Behavior | Use Case |
|---|---|---|
| BLOCK | Reject entire request/response | Strict compliance environments |
| ANONYMIZE | Replace PII with placeholders | Allow processing without exposure |
| Allow | Pass through unchanged | Non-sensitive applications |

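```python
# Creating a Guardrail
import boto3

# Guardrails are managed through the control-plane 'bedrock' client,
# not the 'bedrock-runtime' client used for inference.
bedrock = boto3.client('bedrock')

guardrail_response = bedrock.create_guardrail(
    name='customer-support-guardrail',
    description='Guardrail for customer support chatbot',
    contentPolicyConfig={
        'filtersConfig': [
            {'type': 'HATE', 'inputStrength': 'HIGH', 'outputStrength': 'HIGH'},
            {'type': 'VIOLENCE', 'inputStrength': 'HIGH', 'outputStrength': 'HIGH'}
        ]
    },
    topicPolicyConfig={
        'topicsConfig': [
            {
                'name': 'competitor-discussion',
                'definition': 'Discussion about competitor products or services',
                'type': 'DENY'
            }
        ]
    },
    sensitiveInformationPolicyConfig={
        'piiEntitiesConfig': [
            {'type': 'CREDIT_DEBIT_CARD_NUMBER', 'action': 'ANONYMIZE'},
            {'type': 'US_SOCIAL_SECURITY_NUMBER', 'action': 'BLOCK'}
        ]
    },
    # Required: the messages returned when the guardrail blocks an input or an output.
    blockedInputMessaging='Sorry, I cannot help with that request.',
    blockedOutputsMessaging='Sorry, I cannot provide that response.'
)
```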

Exam Strategy: Guardrails Application

Guardrails can be applied at three levels:

- Model invocation: Direct `InvokeModel` or `Converse` calls

- Knowledge Base queries: Applied during `RetrieveAndGenerate`

- Agent execution: Applied to agent inputs and outputs

The exam tests understanding of where to apply guardrails in complex architectures.
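For the model-invocation level, a guardrail is attached to a Converse call through the `guardrailConfig` argument. The following is a minimal sketch, assuming `client` is a `boto3.client('bedrock-runtime')` instance and that the guardrail ID and version come from an earlier `create_guardrail` call; the helper name is hypothetical.

```python
def converse_with_guardrail(client, model_id, guardrail_id, guardrail_version, user_text):
    """Send one user message through a guardrail; return (text, was_blocked)."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": user_text}]}],
        guardrailConfig={
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
            "trace": "enabled",  # include guardrail assessment details in the response
        },
    )
    # When the guardrail intervenes, the text is the configured blocked message.
    was_blocked = response["stopReason"] == "guardrail_intervened"
    text = response["output"]["message"]["content"][0]["text"]
    return text, was_blocked
```

Checking `stopReason` lets the application distinguish a normal model answer from the guardrail's blocked message, which is useful for logging and for tuning overly strict filters.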

Amazon Bedrock Flows

Bedrock Flows (previously called Prompt Flows) enable visual orchestration of multi-step GenAI workflows without code.

Flow Components:

- Input Node: Receives user input

- Prompt Node: Sends prompts to foundation models

- Knowledge Base Node: Queries Knowledge Bases

- Condition Node: Branching logic

- Lambda Node: Custom code execution

- Output Node: Returns final response

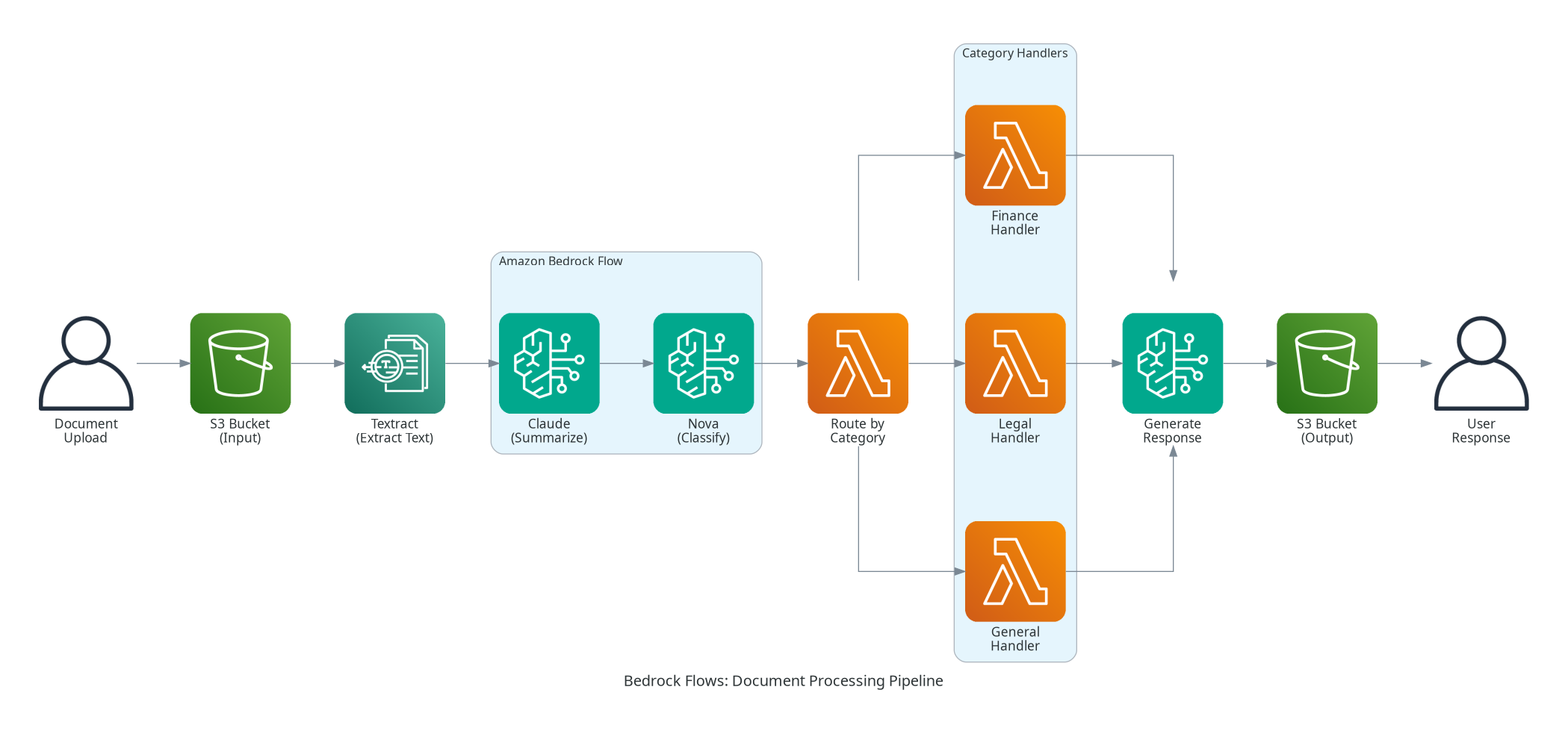

Example Flow: Document Processing Pipeline

This pipeline shows a typical document processing workflow:

- User uploads document to S3

- Textract extracts text from PDFs, images, or scanned documents

- Claude summarizes the extracted content

- Nova classifies the document by category

- Lambda routes to specialized handlers based on classification

- Bedrock generates final response

- Output stored in S3 and returned to user


Model Customization and Fine-Tuning

While Bedrock provides powerful base models, some use cases require customization. The exam tests your understanding of when and how to customize models.

Bedrock Customization Options

| Method | Use Case | Data Required | Cost |
|---|---|---|---|
| Continued Pre-training | Domain adaptation | Large unlabeled corpus | High |
| Fine-tuning | Task specialization | Labeled examples | Medium |
| Prompt Engineering | Quick optimization | No training data | Low |
| RAG (Knowledge Bases) | Enterprise data | Documents | Low-Medium |

Fine-Tuning Considerations:

- Only available for select models (for example, Titan and some Llama variants); check the documentation for the current list

- Requires labeled training data in specific formats

- Creates a custom model version in your account

- Higher inference costs than base models

- Data never leaves your account
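As an illustration of the "specific formats" requirement, text fine-tuning jobs for models such as Titan Text take a JSON Lines file with one prompt/completion pair per line. The sketch below assumes that schema; other models (for example, conversational ones) expect a different record layout, so check the documentation for the model you are customizing. The helper name is hypothetical.

```python
import json


def to_jsonl(examples):
    """Serialize (prompt, completion) pairs as JSON Lines training data."""
    lines = []
    for prompt, completion in examples:
        if not prompt.strip() or not completion.strip():
            raise ValueError("prompt and completion must both be non-empty")
        lines.append(json.dumps({"prompt": prompt, "completion": completion}))
    # One JSON object per line, each terminated by a newline.
    return "".join(line + "\n" for line in lines)
```

The resulting text would be uploaded to S3 and referenced as the training data location when the customization job is created.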

Exam Focus: Fine-Tuning vs RAG

Choose Fine-Tuning when:

- Need to change model behavior/style

- Have consistent task-specific patterns

- Want to encode domain knowledge in weights

Choose RAG when:

- Data changes frequently

- Need citations/source attribution

- Want to keep model general-purpose

- Have limited labeled data

Bedrock Pricing and Cost Optimization

Cost optimization is tested in Domain 4 (Operational Efficiency - 12%). Understanding Bedrock pricing is essential.

Pricing Models:

| Model | On-Demand (per 1M tokens) | Provisioned Throughput |
|---|---|---|
| Claude Sonnet 4.5 | $3.00 input, $15.00 output | Monthly commitment |
| Claude Haiku 4.5 | $1.00 input, $5.00 output | Monthly commitment |
| Titan Text Lite | $0.15 input, $0.20 output | Monthly commitment |
| Nova Micro | $0.035 input, $0.14 output | Monthly commitment |
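To see how much model choice matters, the on-demand rates can be turned into a quick estimate. This is a back-of-the-envelope sketch that uses the Claude Sonnet 4.5 and Nova Micro rates from the table above; the workload numbers are made up for illustration, and actual prices should be checked on the Bedrock pricing page.

```python
# On-demand prices in USD per 1M tokens: (input, output), taken from the table above.
PRICES_PER_MILLION = {
    "claude-sonnet-4.5": (3.00, 15.00),
    "nova-micro": (0.035, 0.14),
}


def estimate_cost(model, input_tokens, output_tokens):
    """Estimate on-demand cost in USD for a given token volume."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (
        input_tokens / 1_000_000 * input_price
        + output_tokens / 1_000_000 * output_price
    )


# Hypothetical workload: 1M requests, each with 1,000 input and 200 output tokens.
requests = 1_000_000
sonnet = estimate_cost("claude-sonnet-4.5", requests * 1_000, requests * 200)
micro = estimate_cost("nova-micro", requests * 1_000, requests * 200)
print(f"Sonnet 4.5: ${sonnet:,.2f}  Nova Micro: ${micro:,.2f}")
```

For this workload the estimate is about $6,000 on Claude Sonnet 4.5 versus about $63 on Nova Micro, which is why routing simple tasks to a smaller model is usually the first optimization to consider.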

Cost Optimization Best Practices:

- Start with smaller models: Use Haiku/Nova Micro for simple tasks

- Implement caching: Cache common queries and responses

- Optimize prompts: Shorter prompts = lower costs

- Use batch inference: For non-real-time workloads

- Monitor usage: Set CloudWatch alarms on token consumption

- Provisioned throughput: For predictable high-volume workloads

Bedrock Security and Compliance

Security is tested across multiple domains, particularly Domain 3 (20%) and throughout integration scenarios.

IAM Policy Example:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "bedrock:InvokeModel",
        "bedrock:InvokeModelWithResponseStream"
      ],
      "Resource": [
        "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-3-haiku*",
        "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-3-sonnet*"
      ]
    }
  ]
}
```

Exam Security Scenarios

Common security scenarios tested:

- Restricting model access by IAM policies

- Enabling VPC endpoints for private Bedrock access

- Configuring CloudTrail for model invocation auditing

- Encrypting Knowledge Base data with customer-managed KMS keys

- Implementing guardrails for PII protection

Monitoring and Troubleshooting

Domain 5 (Testing, Validation, and Troubleshooting - 11%) tests your ability to monitor and debug Bedrock applications.

Key CloudWatch Metrics:

- `InvocationLatency`: Model response time

- `InvocationClientErrors`: 4xx errors (client issues)

- `InvocationServerErrors`: 5xx errors (service issues)

- `InvocationThrottles`: Rate limit hits

- `InputTokenCount`: Tokens consumed in requests

- `OutputTokenCount`: Tokens generated in responses

Common Issues and Solutions:

| Issue | Cause | Solution |
|---|---|---|
| Irrelevant RAG responses | Poor chunking | Adjust chunk size, add overlap |
| Agent tool failures | Schema mismatch | Validate OpenAPI schema |
| High latency | Model selection | Use faster model variant |
| Throttling errors | Rate limits | Implement retry with backoff |
| Guardrail blocks valid content | Overly strict config | Tune filter thresholds |
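The "retry with backoff" fix for throttling can be sketched in a few lines. This is a generic illustration rather than Bedrock-specific code: `operation` is any zero-argument callable, and `is_throttle` is a caller-supplied predicate that decides whether an exception represents throttling. All names and defaults here are assumptions made for the example.

```python
import random
import time


def call_with_backoff(operation, is_throttle, max_attempts=5,
                      base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Retry `operation` on throttling errors with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception as exc:
            # Give up on non-throttling errors or when attempts are exhausted.
            if not is_throttle(exc) or attempt == max_attempts - 1:
                raise
            # Exponential growth capped at max_delay, with full jitter so
            # concurrent clients do not retry in lockstep.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))
```

With boto3, the predicate would typically check a `botocore.exceptions.ClientError` for `exc.response["Error"]["Code"] == "ThrottlingException"`. boto3 can also retry on its own when the client is created with `botocore.config.Config(retries={"max_attempts": 10, "mode": "adaptive"})`, which is often enough without custom code.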


Next Steps

After mastering Bedrock fundamentals, continue your AIP-C01 preparation:

- Complete our AIP-C01 Complete Guide for full exam coverage

- Build hands-on projects using Knowledge Bases, Agents, and Guardrails

- Take Preporato practice exams to test your Bedrock knowledge

- Review AWS documentation for latest Bedrock features and updates



Last updated: February 3, 2026
